Some combinatorial graph problems that have an algebraic answer

all answers have something to do with eigenvalues

Number of triangles

Theorem. Let \(A \in \{0,1\}^{n \times n}\) be the binary adjacency matrix of an undirected graph $G$ with $n$ vertices and without self-loops. Then,

\[\boxed{\text{the number of triangles of } G \text{ is } \frac{1}{6}\text{tr}(A^3).}\]Proof. Note that \((A^3)_{ii} = \sum_{j,k=1}^n A_{ij}A_{jk}A_{ki}\). The number of nonzero summation terms is the same as twice the number of distinct $i,j,k,i$ paths (because each path is counted twice; i.e., $i,j,k,i$ and $i,k,j,i$ are the same triangle). Then, summing over all $i$, each triangle is counted six times (either starting from and returning to $i$, or $j$, or $k$). Thus, the total number of triangles is one sixth of $\sum_{i=1}^n(A^3)_{ii}$. $\square$

Remark. The trace is obviously related to the eigenvalues. Let \(\{\mu_i\}_{i=1}^n\) be the eigenvalues of $A$. Then,

\[\boxed{\text{the number of triangles of } G \text{ is } \frac{1}{6}\sum_{i=1}^n\mu_i^3.}\]Commute time

Theorem. Let \(A \in \mathbb{R}_{\ge0}^{n \times n}\) be the positively weighted adjacency matrix of an undirected, connected graph $G$ with $n$ vertices (self-loops allowed). Let $L = D - A$ be the graph Laplacian matrix with $D$ being the diagonal degree matrix; that is $D_{ii} = \sum_{j=1}^n A_{ij}$. Define the first passage time (or hitting time) $H_{ij}$ to be the the expected amount of time that a random walker starts from $i$ and reaches $j$ for the first time. Further, define the commute time between $i$ and $j$ to be $C_{ij} = H_{ij} + H_{ji}$. We have,

\[\boxed{C_{ij} = \text{vol}(G) ( L^+_{ii} + L^+_{jj} - 2L^+_{ij} ) = \text{vol}(G) (e_i - e_j)^T L^+ (e_i - e_j),}\]where $\text{vol}(G) = \sum_{i=1}^n D_{ii}$ is the sum of degrees, $e_i$ is the $i$-th column of the identity matrix, and $L^+$ is the pseudo-inverse of $L$.

Proof. Consider the hitting time $H_{ij}$ where $j \ne i$. Starting from $i$, the random walker either directly lands on $j$ in one step, or transitions to any other $k$ in one step and then takes $H_{kj}$ time to land on $j$. Therefore,

\[H_{ij} = P_{ij} \cdot 1 + \sum_{k \ne j} P_{ik} (1 + H_{kj}).\]This scalar equation can be reformulated into the matrix equation

\[M = \mathbf{1}\mathbf{1}^T + P(M - M_d),\]where $M$ is a matrix that contains the elements $M_{ij} = H_{ij}$ except the diagonal. The diagonal, denoted by $M_d$, can be figured out by multiplying the stationary distribution vector $\pi^T = \mathbf{1}^T D / \text{vol}(G)$ to the left of both sides of the above matrix equation. Because $\pi^T P = \pi^T$, we obtain $\text{diag}(M_d) = \text{vol}(G) D^{-1} \textbf{1}$. Then, by noting that the matrix of hitting times, $H$, is equal to $M - M_d$, the above matrix equation is equivalent to the following:

\[H + \text{vol}(G) D^{-1} = \mathbf{1}\mathbf{1}^T + PH.\]Rearranging the terms and noting that $L = D(I-P)$, we obtain

\[LH = D\mathbf{1}\mathbf{1}^T - \text{vol}(G) I.\]Then, multiplying $L^+$ to the left, we have

\[(L^+L)H = L^+D\mathbf{1}\mathbf{1}^T - \text{vol}(G) L^+.\]Because the graph is connected, the null space of $L^+L$ is 1-dimensional and is spanned by the vector of all ones. Thus, $H$ must be of the form

\[H = L^+D\mathbf{1}\mathbf{1}^T - \text{vol}(G) L^+ + \mathbf{1} u^T\]for some column vector $u$. The way to figure out $u$ is that $H$ has an empty diagonal. Thus, $u$ must be $\text{vol}(G) \gamma - L^+D\mathbf{1}$, where $\gamma$ is a column vector whose elements are the diagonal elements of $L^+$. This leads to

\[H = L^+D\mathbf{1}\mathbf{1}^T - \mathbf{1}\mathbf{1}^TDL^+ + \text{vol}(G) (\mathbf{1} \gamma^T - L^+).\]Writing $C = H + H^T$ we conclude the proof. $\square$

Remark. The total commute time of the graph, defined as $C = \sum_{i < j} C_{ij}$, turns out to be a trace:

\[\sum_{i < j} C_{ij} = \frac{1}{2}\sum_{i,j=1}^n C_{ij} = \frac{1}{2}\text{vol}(G)(2n\text{tr}(L^+) - 2\textbf{1}^TL^+\textbf{1}) = \text{vol}(G)n\text{tr}(L^+).\]Therefore, if we let $0 = \lambda_1 < \lambda_2 < \cdots < \lambda_n$ be the eigenvalues of $L$, the total commute time

\[\boxed{C = \text{vol}(G)n\sum_{i=2}^n \frac{1}{\lambda_i}.}\]Number of spanning trees

The result that we are going to show is known as the Kirchhoff’s theorem or the matrix-tree theorem. This is a very beautiful result, which connects spanning trees with a determinant. An important insight into this result is the incidence matrix of the graph, which bridges the two concepts. The following lemma reveals the prerequisite insight.

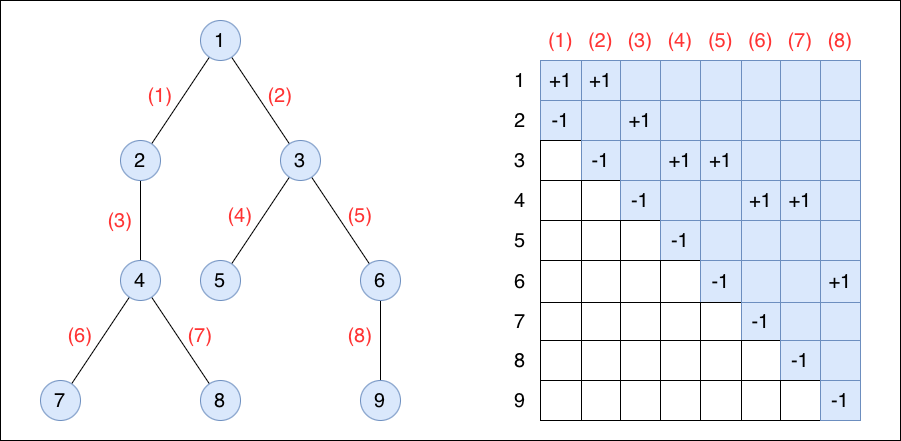

Lemma. Let \(B \in \{0, \pm1\}^{n \times (n-1)}\) be the incidence matrix of an undirected tree $T$ with $n$ vertices and $n-1$ edges. There exists an ordering of the vertices and edges such that $B$ is upper Hessenberg.

Proof. A visualization like the figure above makes the proof of this lemma and that of the subsequent theorem more intuitive.

Pick an arbitrary vertex of $T$ as the root. Label it $1$. Perform a breadth-first search on $T$ from vertex $1$. Vertices are labeled incrementally from $1$ based on the order of their visits during the search. Then, for each edge connecting $i$ and $j$ with $i < j$, label the edge as $(j-1)$. Thus, for every $j$, the $(j-1)$-th column of $B$ will have a $+1$ on row $i$ and a $-1$ on row $j$, making $B$ upper Hessenberg. $\square$

Theorem. Let \(A \in \{0,1\}^{n \times n}\) be the binary adjacency matrix of an undirected graph $G$ with $n$ vertices and without self-loops. Let $L$ be the $n \times n$ graph Laplacian matrix and let $L^{(k)}$ denote the $(n-1) \times (n-1)$ principal submatrix matrix resulting from having the $k$-th row and column of $L$ removed.

\[\boxed{\text{The number of spanning trees of } G \text{ is } \det(L^{(k)}) \text{ for any } k.}\]Proof. Let \(B \in \{0, \pm1\}^{n \times m}\) be the incidence matrix of $G$ with $n$ vertices and $m$ edges. Without loss of generality, we pick $k=1$; that is, we are going to count the number of trees rooted at vertex $1$. The rows of $B$ are ordered such that the first row corresponds to this vertex. Other rows are arbitrarily ordered for the moment and the columns of $B$ are arbitrarily ordered as well.

Let us use $S$ to denote an index set of size $n-1$ (that is, $S \subset [m]$ while $|S|=n-1$), which specifies $n-1$ randomly selected edges of the graph. We use the notation $\widetilde{B}_S$ to mean an $(n-1)\times(n-1)$ submatrix of $B$, which contains the $n-1$ selected columns of $B$ according to $S$ but with the first row removed (conceptually, this means removing vertex $1$). Based on the above lemma, if the $n-1$ selected edges form a spanning tree, we can order these edges and the $n-1$ unremoved vertices such that $\widetilde{B}_S$ is upper triangular with the diagonal elements all being $-1$. Consequently, $\det(\widetilde{B}_S\widetilde{B}_S^T) = 1$.

On the other hand, if the $n-1$ selected edges do not form a spanning tree, then either (a) at least one of the $n-1$ unremoved vertices is a singleton, or (b) there is a loop among the unremoved vertices. For case (a), the corresponding row of $\widetilde{B}_S$ is empty. For case (b), one can orient the edges with directions to form a directed loop and give $+1$ and $-1$ to the tail and the head of each directed edge, respectively. In this case, we can see that the columns of $\widetilde{B}_S$ sum to zero. In both cases, $\det(\widetilde{B}_S\widetilde{B}_S^T) = 0$.

Based on the above analysis, we can conclude that

\[\sum_{S \subset [m], \, |S|=n-1} \det(\widetilde{B}_S\widetilde{B}_S^T)\]exactly counts the number of spanning trees. The Cauchy–Binet formula points out that this sum is nothing but $\det(\widetilde{B}\widetilde{B}^T)$, where $\widetilde{B}$ is $B$ with the first row removed. Because $\widetilde{B}\widetilde{B}^T = L^{(1)}$, the sum is equal to $\det(L^{(1)})$.

As stated earlier, the choice of $k=1$ is without loss of generality. The theorem holds for any $k$. $\square$

Corollary. Let $0 = \lambda_1 < \lambda_2 < \cdots < \lambda_n$ be the eigenvalues of $L$. Then,

\[\boxed{\text{the number of spanning trees of } G \text{ is } \frac{1}{n}\prod_{i=2}^n \lambda_i.}\]Proof. Without loss of generality, we remove the last column and row of $L$ when computing the determinant $\det(L^{(k)})$. We partition the spectral decomposition of $L$ accordingly:

\[L = \begin{bmatrix} U & \frac{1}{\sqrt{n}}\mathbf{1} \\ u^T & \frac{1}{\sqrt{n}} \end{bmatrix} \begin{bmatrix} \Lambda & 0 \\ 0 & 0 \end{bmatrix} \begin{bmatrix} U^T & u \\ \frac{1}{\sqrt{n}}\mathbf{1}^T & \frac{1}{\sqrt{n}} \end{bmatrix},\]where $\Lambda$ contains all the nonzero eigenvalues of $L$. Removing the last row and column of $L$ results in $L^{(n)} = U \Lambda U^T$. Therefore,

\[\det(L^{(n)}) = \det(U \Lambda U^T) = \det(\Lambda)\det(U^TU) = \det(U^TU) \prod_{i=2}^n \lambda_i.\]It remains to compute $\det(U^TU)$. By the orthonormality of the eigenvectors, we have $U^TU + uu^T = I_{n-1}$ and $u^Tu = (n-1)/n$. Then, $U^TU = I_{n-1} - uu^T$. This matrix has a spectral component $uu^T$ with magnitude (eigenvalue) $1/n$, while the orthogonal component with magnitude (eigenvalue) $1$ in all basis directions. Overall, for $U^TU$, there are $n-1$ eigenvalues equal to $1$ and one eigenvalue equal to $1/n$. Thus,

\[\det(U^TU) = \frac{1}{n},\]completing the proof. $\square$

A bonus, fun fact. The earlier theorem holds for any $k$, which implies that $\det(L^{(k)})$ is equal to each other among all $k$. Here, we prove this fact differently.

Proof. By the basic property of determinants,

\[L \,\, \text{adj}(L) = \det(L) \, I,\]where $\text{adj}(L)$ is the adjugate of $L$. In particular, the $(k,k)$ entry of $\text{adj}(L)$ is $\det(L^{(k)})$.

Because $\det(L) = 0$, the symmetric $\text{adj}(L)$ must be some nonzero multiple of $\mathbf{1}\mathbf{1}^T$. Hence, $\text{adj}(L)$ is a matrix whose entries are equal to each other. In particular, the diagonal is a constant, which makes $\det(L^{(k)})$ equal to each other among all $k$. $\square$

Enjoy Reading This Article?

Here are some more articles you might like to read next: